MongoDB Cursors with PHP

Recently I was asked to improve the MongoCursor::batchSize documentation. This began an in-depth investigation into how the PHP driver for MongoDB handles pulling data that has been queried from the MongoDB server. Here are my findings.

A MongoCursor is created as soon as you run the find() method on a MongoCollection object, like in:

$m = new Mongo();

$collection = $m->demoDb->demoCollection;

$cursor = $collection->find();

Just calling find() only creates a cursor object; it does not immediately send the query to the server for processing. That happens only when you start reading from the cursor for the first time. Because of this, you can call additional methods on the newly created cursor object that still influence how the query is run on the server. One such example is the sort() method, which sorts the results according to its arguments (in this example, by name):

$cursor->sort( array( 'name' => 1 ) );

$result = $cursor->getNext();
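To make the "nothing is sent until you read" idea concrete, here is a minimal sketch. LazyCursorSketch is a hypothetical stand-in that I made up for illustration; it is not the driver's MongoCursor, and the "wire" is just an array that records what would have been sent.

```php
<?php
// Hypothetical stand-in for a cursor; this is NOT the driver's MongoCursor.
class LazyCursorSketch
{
    private $sort = array();
    private $sent = false;
    public $log = array(); // records what would go over the wire

    public function sort(array $fields)
    {
        if ($this->sent) {
            // Assumption of this sketch: options cannot change once the query is out.
            throw new LogicException('query already sent; too late to sort');
        }
        $this->sort = $fields;
        return $this;
    }

    public function getNext()
    {
        if (!$this->sent) {
            // First read: only now is the query "sent".
            $this->log[] = 'QUERY sort=' . json_encode($this->sort);
            $this->sent = true;
        }
        return null; // there are no real documents in this sketch
    }
}

$cursor = new LazyCursorSketch();
$cursor->sort(array('name' => 1));   // nothing has been sent yet
$sentBeforeRead = count($cursor->log);
$cursor->getNext();                  // the query goes out here
$sentAfterRead = count($cursor->log);
```

In this sketch the log stays empty until the first getNext() call, which mirrors why sort() can still influence the query after find() has returned.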

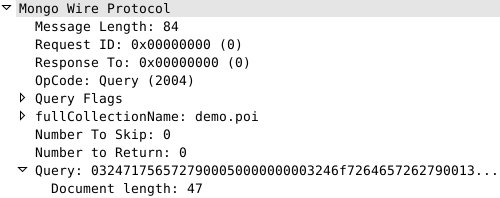

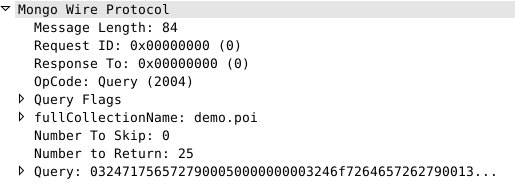

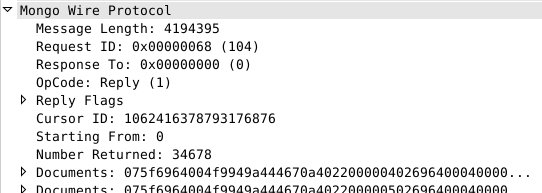

When you then call getNext() on $cursor, the driver sends the query to the server and requests a default number of documents in the first batch. The default Batch Size is 101. Let's have a look at what gets sent on the wire for our simple query for all documents, sorted by name:

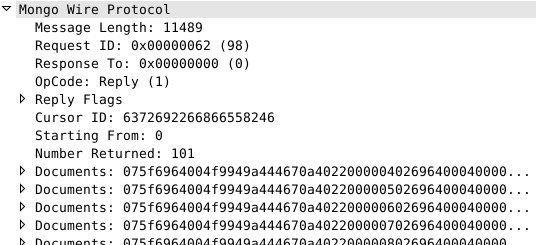

The Number to Return is 0, which means that the default is used. So even though we only want to fetch one result (getNext() asks the cursor for the next document only), the server returns 101 documents:

The driver stores all 101 documents locally, and during the next 100 calls to getNext() it simply returns documents from local memory. Once getNext() is called for the 102nd time, the driver goes back to the server to request more documents:

// skip the other 100 docs

for ($i = 0; $i < 100; $i++) { $cursor->getNext(); }

// request document 102:

$result = $cursor->getNext();
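The buffering described above can be modelled without a server. The sketch below is a hypothetical in-memory model (BufferedCursorSketch and its members are my own names, not driver API). It assumes a first batch of 101 documents, as described above, and, for simplicity, that everything that remains fits in the second reply.

```php
<?php
// Hypothetical in-memory model; the real driver talks to a MongoDB server.
class BufferedCursorSketch
{
    const FIRST_BATCH = 101; // default first batch size, per the article

    private $total;            // documents matching the query on the "server"
    private $sent = 0;         // documents the "server" has handed out so far
    private $buffer = array(); // documents held locally by the "driver"
    public $roundTrips = 0;

    public function __construct($total)
    {
        $this->total = $total;
    }

    private function fetch($count)
    {
        $this->roundTrips++;
        $count = min($count, $this->total - $this->sent);
        for ($i = 0; $i < $count; $i++) {
            $this->buffer[] = array('n' => ++$this->sent);
        }
    }

    public function getNext()
    {
        if (!$this->buffer && $this->sent < $this->total) {
            // The first call sends the query; later calls send a Get More.
            // Simplification: the Get More asks for everything that is left.
            $this->fetch($this->roundTrips === 0 ? self::FIRST_BATCH : $this->total);
        }
        return $this->buffer ? array_shift($this->buffer) : null;
    }
}

$cursor = new BufferedCursorSketch(500);
$trips = array();
for ($call = 1; $call <= 102; $call++) {
    $cursor->getNext();
    $trips[$call] = $cursor->roundTrips; // round trips made so far
}
```

Here $trips[101] is still 1, and it only becomes 2 at the 102nd call, which is the same pattern as in the snippets above.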

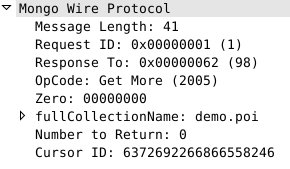

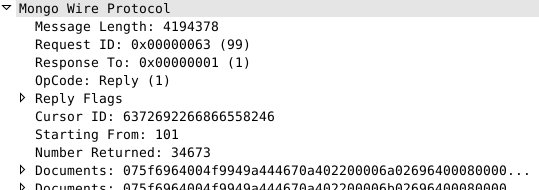

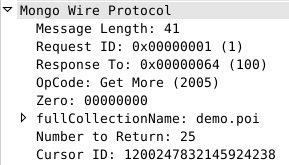

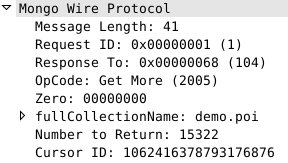

When the driver asks for more documents separately (i.e., not at the same time as it issues a query) and without a specific batch size, the server fills the reply with up to 4MB of documents. On the wire, the Get More request looks like:

and the reply like:

As you can see, the returned data is 4194378 bytes, and the Number Returned is 34673.

Setting your own batch size

You can instruct the driver to use a different batch size by calling the batchSize() method on the $cursor. In this new example, we use the batchSize() method to request 25 documents per round trip to the server:

$cursor = $collection->find()->sort( array( 'name' => 1 ) );

$cursor->batchSize(25);

$result = $cursor->getNext();

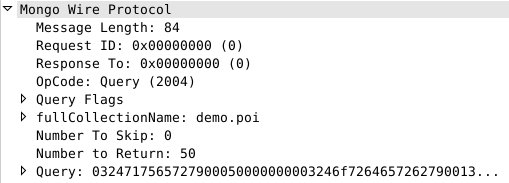

When we run this script, we will see the following on the wire:

As expected, the Number to Return is now 25. During iteration, all query results are returned from the server to the driver in batches of 25 documents:

// retrieve another 25 documents to trigger the getMore

for ($i = 0; $i < 25; $i++) { $cursor->getNext(); }

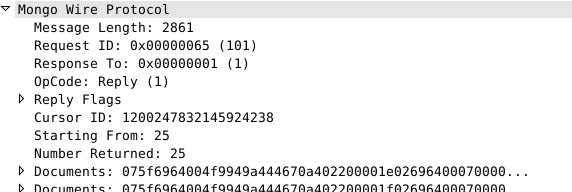

Which creates this query:

And this reply:

As you can see, another 25 documents are returned, starting from the 25th document.
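As a rough illustration of how fixed-size batches line up, the sketch below lists where each reply would start and how many documents it would carry. batchOffsets() is a hypothetical helper of mine, and I am assuming that the starting position is counted from zero and that no reply hits the 4MB cap.

```php
<?php
// Hypothetical helper, not part of the driver.
function batchOffsets($totalDocuments, $batchSize)
{
    if ($batchSize < 2) {
        // Batch sizes of 1 and below are treated specially by the server.
        throw new InvalidArgumentException('this sketch only covers batch sizes of 2 or more');
    }
    $replies = array();
    for ($start = 0; $start < $totalDocuments; $start += $batchSize) {
        $replies[] = array(
            'startingFrom'   => $start,
            'numberReturned' => min($batchSize, $totalDocuments - $start),
        );
    }
    return $replies;
}

// 60 matching documents, fetched 25 at a time.
$replies = batchOffsets(60, 25);
```

With 60 documents and a batch size of 25, the second reply starts at position 25 and the third carries only the 10 documents that are left.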

Using limit

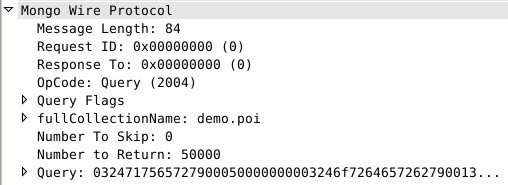

Besides batchSize(), the driver also supports limit(). The limit is enforced exclusively on the client side: it is just a counter that restricts how many times the cursor can iterate over the data. However, the driver also uses the value passed to limit() to ask the server for only the number of documents that it still needs to fetch. This means that if we run this script, the driver will request 50000 documents:

$cursor = $collection->find()->sort( array( 'name' => 1 ) );

$cursor->limit( 50000 );

$res = $cursor->getNext();

On the wire we'll see:

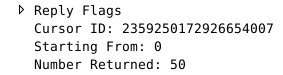

Sadly, not all 50000 documents fit in the first reply (as every reply is limited to 4MB), and the server's reply shows that it has only returned 34678 documents:

The driver now calculates how many documents it still needs to fulfill its limit of 50000: 50000 - 34678 = 15322. It then requests those documents with a Get More query:
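The bookkeeping behind that subtraction can be sketched as follows. requestsForLimit() is a hypothetical helper, and the per-reply document cap is an assumption taken from the numbers in this example; in reality the cap is in bytes, so it varies with document size.

```php
<?php
// Hypothetical helper, not part of the driver.
// $maxDocsPerReply stands in for "whatever fits in a 4MB reply".
function requestsForLimit($limit, $maxDocsPerReply)
{
    if ($maxDocsPerReply < 1) {
        throw new InvalidArgumentException('a reply must be able to hold at least one document');
    }
    $requests = array();
    $received = 0;
    while ($received < $limit) {
        $ask = $limit - $received;   // only ask for what is still needed
        $requests[] = $ask;
        $received += min($ask, $maxDocsPerReply);
    }
    return $requests;
}

// Limit of 50000, with 34678 documents fitting in one reply.
$requests = requestsForLimit(50000, 34678);
```

For these numbers the sketch produces two requests: one for 50000 documents and one for the remaining 15322.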

It is also possible to combine limit() and batchSize().

Combining limit and batchSize

If we just use limit(), the driver will always try to fetch as many documents as it can to fulfill the limit. This sometimes means it will fill up all 4MB of the maximum allowed reply packet, especially if your limit is set high enough (in our example, that's more than 34678 documents). That's often not what you want, and you can use a combination of limit() and batchSize() to fix it. If we want to query at most 128 documents with at most 50 documents per batch, we can specify that as:

$cursor = $collection->find()->sort( array( 'name' => 1 ) );

$cursor->limit( 128 )->batchSize( 50 );

$res = $cursor->getNext();

// retrieve the other 127 documents that we still want

for ($i = 0; $i < 127; $i++) { $cursor->getNext(); }

On the wire, we'll see the following exchange:

Initial query:

First 50 documents:

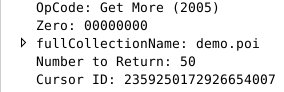

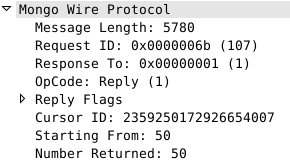

First Get More for the second batch of 50:

The second batch of 50 documents:

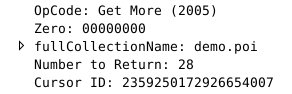

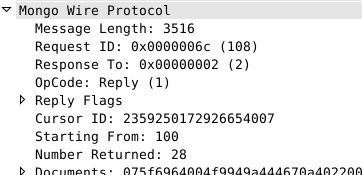

The second and last Get More for a batch of 28 (128 - 50 - 50 = 28):

And the last batch of 28 documents returned:
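The sequence of batch sizes in that exchange follows from a small calculation, sketched here. planBatches() is a hypothetical name of mine, and the sketch assumes that enough documents match the query and that no batch runs into the 4MB reply cap.

```php
<?php
// Hypothetical helper, not part of the driver.
function planBatches($limit, $batchSize)
{
    if ($limit < 1 || $batchSize < 2) {
        // Batch sizes of 1 and below have a special meaning, so they are excluded here.
        throw new InvalidArgumentException('expects a positive limit and a batch size of 2 or more');
    }
    $plan = array();
    for ($left = $limit; $left > 0; $left -= $batchSize) {
        // Never ask for more than the limit still allows.
        $plan[] = min($batchSize, $left);
    }
    return $plan;
}

$plan = planBatches(128, 50);
```

For a limit of 128 and a batch size of 50, the plan is 50, 50 and 28, matching the three requests above.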

I wouldn't suggest a batch size as small as 50, though, as it incurs a lot of round trips to and from the server. In some cases, however, a small batch size does make sense.

Take, for example, a situation where you need to process hundreds of thousands of documents and the processing time for each document is 2 seconds. In the first batch, the driver will pull 101 documents, which in total take 202 seconds to process. When the driver then attempts to fetch the next batch with Get More, it fails because the default cursor timeout (on the client side) is only 30 seconds. Setting your batch size to 5 in this case prevents your cursor from timing out, as each batch then takes only 10 seconds to process. You can of course also change the cursor timeout. Do not use the immortal flag though, as that means something else (see NoCursorTimeout in the MongoDB Wire Protocol documentation).
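The arithmetic in that scenario is simple enough to put in a sketch. Both functions are hypothetical helpers of mine, and they only follow the reasoning above: they compare the time spent processing one batch with the 30 second timeout, nothing more.

```php
<?php
// Hypothetical helpers that mirror the reasoning in the text.
function batchProcessingSeconds($batchSize, $secondsPerDocument)
{
    return $batchSize * $secondsPerDocument;
}

function exceedsTimeout($batchSize, $secondsPerDocument, $timeoutSeconds)
{
    return batchProcessingSeconds($batchSize, $secondsPerDocument) > $timeoutSeconds;
}

$defaultBatchSeconds = batchProcessingSeconds(101, 2); // first batch of 101 documents
$defaultTooSlow      = exceedsTimeout(101, 2, 30);
$smallBatchTooSlow   = exceedsTimeout(5, 2, 30);
```

With 2 seconds per document, the default first batch keeps the script busy for 202 seconds, while a batch of 5 documents stays well under the 30 second mark.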

One More Thing

Setting the batch size to 1, as well as setting a negative batch size, has a special meaning for the MongoDB server. In both cases, it instructs the server to return up to the absolute value of the requested number of documents and then terminate the cursor, allowing no further documents to be fetched. That means that this script doesn't do what you expect it to:

$cursor = $collection->find()->sort( array( 'name' => 1 ) );

$cursor->batchSize( 1 )->limit( 10 );

$cursor->getNext();

var_dump( $cursor->getNext() );

The var_dump() for the second getNext() will always show NULL. However, if you set a batch size of 2, it works just like you would expect:

$cursor = $collection->find()->sort( array( 'name' => 1 ) );

$cursor->batchSize( 2 )->limit( 10 );

$cursor->getNext(); // item 1

$cursor->getNext(); // item 2

var_dump( $cursor->getNext() ); // item 3

Now let's set a batch size of -2 and see what happens on the wire. The request is:

As you can see, the driver really sends -2 to the server, indicating that negative batch sizes are handled on the server side.

And the reply is:

The Cursor ID is 0 in the reply, meaning that there is no cursor to fetch further documents.
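The rules for special batch sizes can be summed up in a few lines. This is only my reading of the behaviour described above, written as a hypothetical function; it is not code from the server or the driver.

```php
<?php
// Hypothetical summary of how a requested batch size is interpreted,
// based only on the behaviour described in this article.
function interpretBatchSize($requested)
{
    if ($requested === 0) {
        // 0 means "use the default"; the cursor stays open.
        return array('documents' => null, 'closesCursor' => false);
    }
    return array(
        'documents'    => abs($requested),                    // at most this many documents
        'closesCursor' => ($requested < 0 || $requested === 1), // no Get More possible afterwards
    );
}

$minusTwo = interpretBatchSize(-2);
$one      = interpretBatchSize(1);
$two      = interpretBatchSize(2);
```

Both -2 and 1 end with a closed cursor, which is why the reply above carries a Cursor ID of 0, while a batch size of 2 leaves the cursor open for further Get More requests.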

We have now come to the end of this article on MongoDB's cursors. All the screenshots of the packets on the wire were made with Wireshark, which has support for the MongoDB wire protocol. You can read more about the wire protocol at http://www.mongodb.org/display/DOCS/Mongo+Wire+Protocol, but this is often not something you should have to be concerned about. To sum things up:

- the Batch Size controls how many documents the server sends in a reply packet.

- the server will never send more documents than fit in 4MB.

- the Limit option controls how many documents the driver will (try to) fetch from the server.

- by default the first batch of documents without any limits specified is 101 documents.

- a negative Batch Size or a Batch Size of 1, will terminate the cursor immediately after returning the next batch of documents.

Comments

Awesome tutorial, really love MongoDB for all its features!

Will be giving this a more detailed look and I'll be seeing how I can integrate it with my MongoDB app Thundergallery (http://thundergallery.fusionstrike.com/)

Hi, how did you get the Wire Protocol output?

@Rafael: I used wireshark for that.

Can I just validate my understanding of batch sizes and limits with you. Is the following sentence accurate?

"Using limit will automatically make the batch size obsolete unless you explicitly set a batch size"

Thanks!

Shortlink

This article has a short URL available: https://drck.me/mongocur-9f8